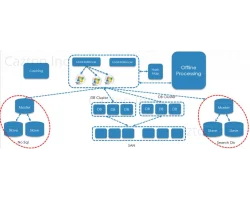

Cazton's Enterprise Search team brings years of hands-on experience across a range of enterprise search technologies, including Azure AI Search, Apache SOLR, Elasticsearch, the ELK Stack, Lucidworks Fusion, and Apache Ignite. Our architects, consultants, and developers design and build custom applications tailored to your needs. Whether you are facing scalability issues, sizing hardware, or looking for best practices and architectural design patterns, our experts can guide you through the intricacies of enterprise search.

Beyond Enterprise Search, Cazton also works in Vector Search and AI. In Vector Search, our team works with algorithms that interpret relationships between data points to deliver more contextually relevant results. On the AI side, we use machine learning and LLMs to enhance search capabilities with solutions that adapt as your data evolves. Our services include expert consulting, training, recruiting, and tailored software solutions, so that your enterprise search strategy aligns with your business objectives. Contact us to get the most out of your search solutions.

UX Consulting

Azure AI Search

Unleash powerful search capabilities with Azure AI Search

SOLR

Optimize search experiences through SOLR proficiency

Elasticsearch

Unleash efficient data retrieval

Azure Cosmos DB

All you need to know

Redis

Enhance performance with Redis expertise

Microsoft Fabric

Implementing scalable and reliable distributed applications with Microsoft Fabric

Databricks

Unlocking the potential of Databricks for data analytics

MongoDB

Empowering applications with MongoDB's flexibility

Big Data

Navigating and leveraging immense data landscapes

Apache Spark

Empowering data-driven decisions with proficiency

Hadoop

Maximizing potential with its expansive capabilities

Kafka

Streamlining data processing with expertise

Spark.NET

Uniting Spark's power with the versatility of .NET

Polyglot Persistence

Optimize data storage across diverse platforms

Postgres

Leverage Postgres for scalable, robust databases

SQL Server

Maximize efficiency and performance with SQL Server expertise

Apache Ignite

Accelerate applications with Apache Ignite's speed

Cassandra

Implement scalable and resilient solutions with Cassandra